NVIDIA ConnectX-6 MCX653106A-ECAT InfiniBand Adapter 200Gb/s Smart NIC

Product details:

| Brand: | Mellanox |
|---|---|
| Model number: | MCX653106A-ECAT |
| Document: | connectx-6-infiniband.pdf |

Payment and shipping terms:

| Minimum order quantity: | 1 unit |
|---|---|
| Price: | Negotiable |
| Packaging details: | Outer carton |
| Delivery time: | Depends on stock |
| Payment terms: | T/T |
| Supply ability: | Supplied per project/batch |

Detailed information

| Product status: | In stock | Application: | Server |
|---|---|---|---|
| Interface type: | InfiniBand | Ports: | Dual |
| Maximum speed: | 100GbE | Type: | Wired |
| Condition: | New and original | Warranty: | 1 year |
| Model: | MCX653106A-ECAT | Name: | Original Mellanox network card MCX653106A-ECAT ConnectX-6 100Gb/s dual-port QSFP56 Ethernet Adap |
| Keyword: | Mellanox network card | | |
| Highlight: | NVIDIA ConnectX-6 InfiniBand adapter, Smart NIC 200Gb/s, Mellanox network card with warranty | | |

Product description

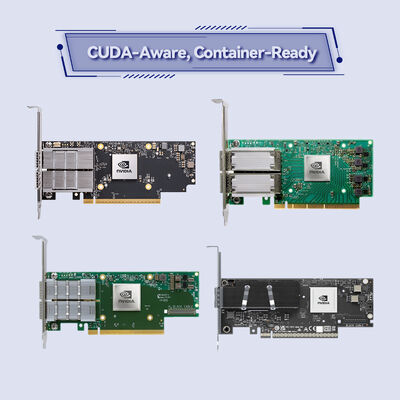

Dual-Port 200 Gb/s Smart Adapter with In-Network Computing

The NVIDIA ConnectX-6 MCX653106A-ECAT delivers up to 200 Gb/s of aggregate bandwidth (100 Gb/s per port), sub-microsecond latency, and hardware offloads for HPC, AI, and hyperconverged storage. Featuring RDMA, NVMe-oF acceleration, block-level XTS-AES encryption, and PCIe 4.0, this dual-port QSFP56 InfiniBand adapter maximizes data center efficiency and scalability. It is well suited to GPU clusters, ML training, and mission-critical networks.

The MCX653106A-ECAT is part of the NVIDIA ConnectX-6 InfiniBand adapter family, designed for the most demanding workloads. It combines two QSFP56 ports, each capable of up to 100 Gb/s InfiniBand or 100 Gb/s Ethernet connectivity on this OPN (200 Gb/s per port is available on the -HDAT variants), with hardware-based reliable transport, congestion control, and In-Network Computing engines. By offloading collective operations, MPI tag matching, and encryption from the host CPU, the adapter reduces CPU overhead and raises application performance in large-scale clusters. Enterprises, research labs, and hyperscale data centers rely on ConnectX-6 to build energy-efficient, low-latency networks.

- Up to 100 Gb/s per port on this OPN (InfiniBand / 100GbE); up to 200 Gb/s per port (InfiniBand HDR / 200GbE) on -HDAT variants

- Up to 215 million messages/sec

- Block-level XTS-AES 256/512-bit encryption, FIPS compliant

- Collective offloads, NVMe-oF target/initiator offloads

- PCIe Gen 4.0/3.0 x16 (dual-port support)

- SR-IOV with up to 1K VFs, ASAP2, Open vSwitch offload

- RoCE, XRC, DCT, on-demand paging, and adaptive routing support

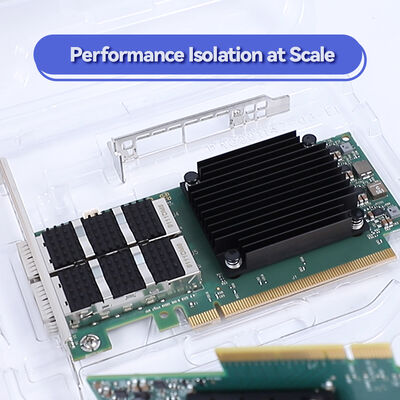

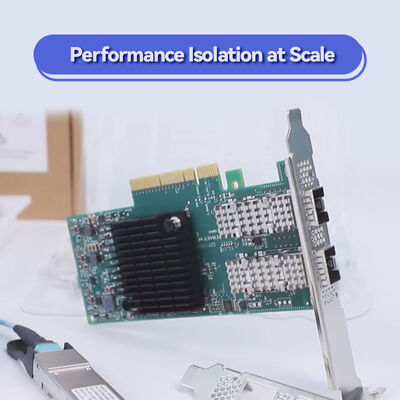

PCIe stand-up (low-profile) card, dual-port QSFP56

Built on NVIDIA's proven InfiniBand architecture, ConnectX-6 integrates In-Network Computing to accelerate MPI operations, deep learning frameworks, and storage protocols. The adapter supports Remote Direct Memory Access (RDMA) for zero-copy data transfers that bypass the CPU and the kernel. Hardware-based congestion control keeps performance predictable even under heavy load. In addition, NVIDIA GPUDirect RDMA allows data to move directly between GPU memory and the network adapter, which substantially reduces latency for AI training. With support for NVMe over Fabrics (NVMe-oF) offloads, the card lowers CPU utilization in storage arrays and enables high-throughput, low-latency access to NVMe flash.

- High-Performance Computing (HPC): Large-scale simulations, climate modeling, and computational fluid dynamics that require low latency and high bandwidth.

- AI and Machine Learning Clusters: Distributed training of deep neural networks, using GPUDirect and RDMA for maximum efficiency.

- NVMe-oF Storage Systems: Target or initiator offloads enable high-performance disaggregated storage with low CPU overhead.

- Hyperscale Data Centers: Virtualized environments with SR-IOV, overlay networks, and service chaining.

- Financial Services: Ultra-low-latency trading infrastructure that requires deterministic performance.

The ConnectX-6 MCX653106A-ECAT is compatible with a wide range of servers, switches, and operating systems. It interoperates with NVIDIA Quantum InfiniBand switches (HDR 200 Gb/s) as well as 200GbE Ethernet switches. The adapter supports standard PCIe slots (x16, x8, x4) and includes driver support for the major OS platforms.

| Parameter | Specification |
|---|---|
| Product Model | MCX653106A-ECAT |
| Data Rate | 100 Gb/s, 50 Gb/s, 40 Gb/s, 25 Gb/s, 10 Gb/s, 1 Gb/s (InfiniBand and Ethernet); 200 Gb/s on -HDAT OPNs only |
| Ports | 2x QSFP56 connectors |
| Host Interface | PCIe Gen 4.0 / 3.0 x16 (supports x8, x4, x2, x1 configurations) |
| Latency | Sub-microsecond (typically < 0.8 µs) |
| Message Rate | Up to 215 million messages/sec |
| Encryption | XTS-AES 256/512-bit, ready for FIPS 140-2 compliance |
| Form Factor | Low-profile PCIe stand-up (tall bracket mounted, short bracket included) |
| Dimensions (without bracket) | 167.65 mm x 68.90 mm |
| Power Consumption | 22 W typical (depends on traffic) |
| Virtualization | SR-IOV (1K VFs), VMware NetQueue, NPAR, ASAP2 flow offload |
| Management | NC-SI, MCTP over PCIe/SMBus, PLDM for firmware update and monitoring |
| Remote Boot | InfiniBand, iSCSI, PXE, UEFI |
| Operating Systems | RHEL, SLES, Ubuntu, Windows Server, FreeBSD, VMware vSphere, OFED stack |

| Ordering Part Number (OPN) | Ports | Maximum Speed | Host Interface | Key Differentiator |
|---|---|---|---|---|
| MCX653106A-ECAT | 2x QSFP56 | 100 Gb/s (and lower) | PCIe 3.0/4.0 x16 | Dual-port 100GbE/IB with advanced offloads; confirm hardware block-level encryption support for this variant against the NVIDIA datasheet; well suited to virtualization and storage |
| MCX653105A-HDAT | 1x QSFP56 | 200 Gb/s | PCIe 3.0/4.0 x16 | Single-port 200 Gb/s, encryption support |
| MCX653106A-HDAT | 2x QSFP56 | 200 Gb/s | PCIe 3.0/4.0 x16 | Dual-port, full 200 Gb/s bandwidth, encryption offload |
| MCX653105A-ECAT | 1x QSFP56 | 100 Gb/s | PCIe x16 | Single-port 100 Gb/s, lowest-cost entry point |
| MCX653436A-HDAT (OCP 3.0) | 2x QSFP56 | 200 Gb/s | PCIe 3.0/4.0 x16 | OCP 3.0 small form factor, dual-port |

- Maximum Application Performance: Hardware offloads for MPI, NVMe-oF, and encryption free CPU cores for the actual workload.

- Future-Ready Bandwidth: PCIe 4.0 and 200 Gb/s readiness extend the adapter's useful life in high-speed networks.

- In-Network Memory and Computing: Supports collective offloads and burst buffering, reducing data movement overhead.

- Trusted Security: Block-level AES-XTS encryption with FIPS compliance protects data at rest and in transit, with the encryption work offloaded to hardware rather than the host CPU.

- Simplified Management: Broad OS and hypervisor support with a unified driver stack (OFED, WinOF-2).

Hong Kong Starsurge Group provides full technical support, warranty coverage, and RMA services for all NVIDIA ConnectX adapters. Our team of network engineers assists with configuration, firmware updates, and performance tuning. We offer global shipping, volume pricing for data center projects, and custom stock reservations. For volume orders, contact our sales team for tailored quotes and lead-time details.

• Confirm that the PCIe slot supplies adequate power (up to 75 W through the slot; this adapter typically draws < 25 W).

• For liquid-cooled platforms, verify compatibility with the Intel Server System D50TNP if a cold-plate variant is required (this OPN is standard air-cooled).

• Verify OS driver compatibility with the latest OFED or WinOF-2 stacks.

Since 2008, Hong Kong Starsurge Group Co., Limited has supplied enterprise-grade networking hardware, systems integration, and IT services worldwide. As a trusted partner for NVIDIA networking products, Starsurge delivers certified solutions for government, financial, healthcare, education, and hyperscale data centers. Our technical team supports deployment from pre-sales architecture design through post-sales support. With a customer-centered philosophy, we provide customized, scalable infrastructure components, including NICs, switches, cables, and end-to-end networking solutions.

Global delivery · Multilingual support · OEM services available

| Component / Ecosystem | Supported | Notes |
|---|---|---|
| NVIDIA Quantum HDR switches | ✓ Yes | Full 200 Gb/s fabric integration |
| 200G/100G Ethernet switches | ✓ Yes | Requires compatible transceiver/FEC modes |
| GPUDirect RDMA | ✓ Yes | Supported NVIDIA GPU series |
| VMware vSphere / ESXi | ✓ Certified | Native drivers, SR-IOV support |
| Windows Server 2019/2022 | ✓ Yes | WinOF-2 driver package |
| Linux kernel and OFED | ✓ Full support | MLNX_OFED, inbox drivers |
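On a Linux host, one way to confirm that the driver has bound to the adapter and that each port negotiated the expected speed is to read the RDMA sysfs entries. The sketch below assumes the usual `/sys/class/infiniband/<device>/ports/<n>/rate` layout and a rate string that begins with a number followed by `Gb/sec`. The helper names and the `mlx5_0` device name in the comments are illustrative and are not taken from this listing.

```python
import re
from pathlib import Path
from typing import Dict, Optional

# Matches the leading number in strings such as "100 Gb/sec (4X EDR)".
RATE_RE = re.compile(r"^\s*([\d.]+)\s*Gb/sec")


def parse_rate(text: str) -> Optional[float]:
    """Return the link rate in Gb/s, or None if the text is not recognised."""
    match = RATE_RE.match(text)
    return float(match.group(1)) if match else None


def port_rates(root: str = "/sys/class/infiniband") -> Dict[str, Optional[float]]:
    """Map "device/port" (for example "mlx5_0/1") to its reported rate in Gb/s.

    Returns an empty dict when the directory does not exist, e.g. when no
    RDMA driver is loaded or the host is not running Linux.
    """
    base = Path(root)
    rates: Dict[str, Optional[float]] = {}
    if not base.is_dir():
        return rates
    for rate_file in sorted(base.glob("*/ports/*/rate")):
        device = rate_file.parts[-4]  # <device>/ports/<n>/rate
        port = rate_file.parts[-2]
        rates[f"{device}/{port}"] = parse_rate(rate_file.read_text())
    return rates


if __name__ == "__main__":
    found = port_rates()
    if not found:
        print("No RDMA devices found under /sys/class/infiniband")
    for name, rate in found.items():
        print(f"{name}: {rate} Gb/s")
```

A port that reports a lower rate than planned usually points to the cable, transceiver, or switch-port configuration rather than the adapter, so it is worth checking those first.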

- Confirm the required link speed: does dual-port 100 Gb/s meet your cluster's bandwidth plan? For dual-port 200 Gb/s, consider the -HDAT OPN.

- Check the server's PCIe slot: physical x16, Gen 3 or Gen 4 recommended.

- Check the cable type: passive copper QSFP56 (up to 5 m) or active optical cables for longer reach.

- Make sure operating system drivers are available (OFED, WinOF-2).

- For encryption requirements: confirm whether built-in block-level encryption is needed. The MCX653106A-ECAT supports AES-XTS, but always confirm the FIPS level against the NVIDIA datasheet.

- Assess virtualization needs: SR-IOV, VXLAN offload, and so on.
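As a rough sanity check for the PCIe slot item in the checklist above, the sketch below estimates one-direction PCIe link bandwidth from the per-lane signalling rate (8 GT/s for Gen 3, 16 GT/s for Gen 4) and the 128b/130b line encoding, then compares it with the combined line rate of both ports. The function names are hypothetical, and the figures ignore packet and protocol overhead, so real throughput will be somewhat lower.

```python
# Raw per-lane signalling rate in GT/s for each PCIe generation.
GT_PER_LANE = {3: 8.0, 4: 16.0}

# Gen 3 and Gen 4 both use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def pcie_gbps(gen: int, lanes: int) -> float:
    """Approximate one-direction link bandwidth in Gb/s, before protocol overhead."""
    return GT_PER_LANE[gen] * ENCODING_EFFICIENCY * lanes


def slot_covers_ports(gen: int, lanes: int, ports: int = 2,
                      port_gbps: float = 100.0) -> bool:
    """True if the slot can carry every port at line rate simultaneously."""
    return pcie_gbps(gen, lanes) >= ports * port_gbps


if __name__ == "__main__":
    for gen, lanes in [(3, 16), (4, 8), (4, 16)]:
        bandwidth = pcie_gbps(gen, lanes)
        verdict = "covers" if slot_covers_ports(gen, lanes) else "does not cover"
        print(f"Gen {gen} x{lanes}: {bandwidth:.1f} Gb/s, {verdict} 2 x 100 Gb/s")
```

Under these assumptions, a Gen 3 x16 slot (about 126 Gb/s) can feed one 100 Gb/s port at line rate but not both at once, while a Gen 4 x16 slot (about 252 Gb/s) covers both.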