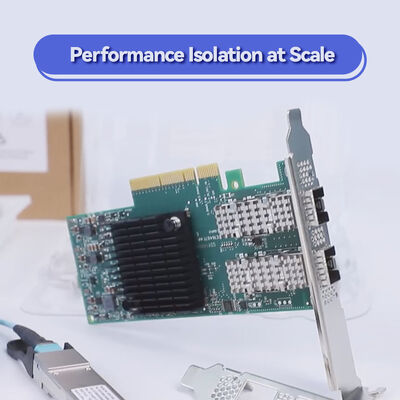

Mellanox ConnectX-5 MCX516A-CCAT 100GbE Dual-Port QSFP28 Adapter Card

Product Details:

| Brand: | Mellanox |
|---|---|
| Model number: | MCX516A-CCAT |
| Document: | connectx-5-en-card.pdf |

Payment and Shipping Terms:

| Minimum order quantity: | 1 pc |
|---|---|
| Price: | Negotiable |
| Packaging details: | Outer carton |
| Delivery time: | Based on inventory |
| Payment terms: | T/T |
| Supply ability: | Supplied per project/batch |

Detailed Information

| Product status: | In stock | Application: | Server |
|---|---|---|---|
| Interface type: | Network | Ports: | Dual |
| Maximum speed: | 100GbE | Connector type: | QSFP28 |
| Type: | Wired | Condition: | New and original |
| Warranty: | 1 year | Model: | MCX516A-CCAT |
| Name: | Mellanox CX516A ConnectX-5 100GbE MCX516A-CCAT Dual-Port QSFP28 PCI-E Adapter Network Card | Keyword: | Mellanox network card |
| Highlight: | Mellanox ConnectX-5 100GbE network card, Dual-port QSFP28 adapter card, Mellanox MCX516A-CCAT server adapter | | |

Product Description

Mellanox ConnectX-5 MCX516A-CCAT 100GbE Dual-Port QSFP28 Adapter Card

High-Performance Ethernet Adapter Card

Dual-Port 100GbE QSFP28 | PCIe 3.0 x16 | Low-Profile | RDMA & RoCE | Smart NIC for HPC, AI, Cloud & Storage

Up to 100Gb/s per port • 750ns ultra-low latency • 200 million messages per second • Hardware offloads for NVMe-oF, OvS, T10-DIF • PCIe 3.0 x16 with embedded switch • RoCE for overlay networks

Product Overview

The Mellanox ConnectX-5 EN MCX516A-CCAT is a high-performance dual-port 100GbE Ethernet adapter card designed for bandwidth-intensive data center workloads including HPC clusters, AI training fabrics, cloud data centers, and software-defined storage. Built on the intelligent ConnectX-5 architecture, this PCIe 3.0 x16 low-profile card delivers ultra-low latency (750ns), high message rate (200 Mpps), and comprehensive hardware offloads that reduce CPU overhead while accelerating virtual switching, NVMe over Fabric, and overlay network processing.

As part of the ConnectX-5 EN series, the MCX516A-CCAT features QSFP28 connectors, supports multiple speeds (100GbE, 50GbE, 40GbE, 25GbE, 10GbE, 1GbE), and enables RoCE (RDMA over Converged Ethernet) for efficient remote direct memory access. With ASAP² (Accelerated Switching and Packet Processing) technology, it offloads Open vSwitch (OvS) data-plane tasks, freeing server resources for application workloads.

Key Features

- Ultra-High Bandwidth: Dual-port 100GbE, aggregate 200Gb/s, PCIe 3.0 x16 interface, 750ns latency, 200M messages per second

- Advanced Offloads: OvS/vRouter offload (ASAP²), NVMe-oF target offload, T10-DIF Signature Handover, RoCE for overlay networks

- Virtualization & I/O: SR-IOV (up to 512 VFs), embedded PCIe switch, header rewrite, NAT hardware offload, flexible match-action tables

- Overlay Networking: Hardware encapsulation/decapsulation for VXLAN, NVGRE, GENEVE, MPLS, NSH -- stateless offloads for SDN environments

- Form Factor & Management: Low-profile PCIe 3.0 x16, NC-SI over MCTP, PLDM monitoring & firmware update, UEFI/PXE/iSCSI remote boot
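The bandwidth figures in the feature list can be sanity-checked with a little arithmetic. The sketch below compares the per-direction capacity of a PCIe 3.0 x16 link (8 GT/s per lane with 128b/130b encoding, as defined by the PCIe 3.0 standard) against the combined line rate of both Ethernet ports. The function and constant names are illustrative only, and the calculation ignores PCIe packet and flow-control overhead, so real host throughput will be somewhat lower than the figure it prints.

```python
# Back-of-the-envelope comparison of PCIe 3.0 x16 host bandwidth
# against the combined line rate of the two 100GbE ports.
# Names below are illustrative; only line encoding overhead is modelled.

PCIE3_GT_PER_LANE = 8.0        # PCIe 3.0 signalling rate, GT/s per lane
PCIE3_ENCODING = 128 / 130     # 128b/130b line encoding efficiency
LANES = 16
PORTS = 2
PORT_RATE_GBPS = 100.0         # per-port Ethernet line rate


def pcie_raw_gbps(lanes: int = LANES) -> float:
    """Per-direction PCIe 3.0 bandwidth in Gb/s after line encoding."""
    return PCIE3_GT_PER_LANE * PCIE3_ENCODING * lanes


def ethernet_aggregate_gbps(ports: int = PORTS) -> float:
    """Sum of the port line rates in one direction, in Gb/s."""
    return PORT_RATE_GBPS * ports


if __name__ == "__main__":
    host = pcie_raw_gbps()
    wire = ethernet_aggregate_gbps()
    print(f"PCIe 3.0 x16 per direction: {host:.1f} Gb/s")
    print(f"Ethernet ports combined:    {wire:.1f} Gb/s")
    print(f"Host link covers {host / wire:.0%} of the combined port rate")
```

Under these assumptions the host link tops out at roughly 126 Gb/s per direction, so the 200Gb/s aggregate figure is best read as port capacity rather than sustained traffic to or from a single host. The second port is typically valuable for redundancy, multi-path designs, or traffic that stays within the adapter.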

Technology Deep Dive

Mellanox ASAP² (Accelerated Switching & Packet Processing) -- the MCX516A-CCAT offloads the entire vSwitch/vRouter data path to hardware without modifying the control plane. Flexible match-action tables and a programmable parser enable hardware offloads for current and future protocols, delivering wire-speed packet processing with near-zero CPU load.

RDMA over Converged Ethernet (RoCE) provides low-latency, CPU-bypass data transfers essential for distributed storage, HPC, and AI training. ConnectX-5 adds adaptive routing on reliable transport, out-of-order RDMA support, and burst buffer offloads for background checkpointing.

Embedded PCIe Switch -- the internal switch allows host chaining, elimination of backend ToR switches for storage appliances, and bifurcation capabilities, significantly reducing TCO for rack-scale designs.

NVMe over Fabric (NVMe-oF) Target Offloads minimize CPU intervention for remote NVMe access, delivering high-performance block storage with reduced latency and increased IOPS.

Typical Deployments

- High-Performance Computing (HPC): MPI tag matching, rendezvous offloads, low-latency interconnects for simulation and modeling

- AI & Machine Learning Clusters: GPU Direct RDMA (PeerDirect) communication acceleration for distributed training

- Cloud & Web 2.0 Data Centers: High-density virtualization, SDN environments with VXLAN/GENEVE overlays, OvS offload for NFV

- Software-Defined Storage: NVMe-oF target acceleration, distributed file systems (Ceph, Lustre) leveraging RoCE and iSER

- Telecom & 5G: Ultra-low latency for vEPC, vIMS, and user plane functions (UPF) with hardware service chaining

Technical Specifications

| Category | Specification |
|---|---|
| Product Model | MCX516A-CCAT |
| Product Series | Mellanox ConnectX-5 EN |
| Port Speed & Type | 2x 100GbE QSFP28 (also supports 50/40/25/10/1GbE) |
| Host Interface | PCIe 3.0 x16 (requires a physical x16 slot; link auto-negotiates to x8, x4, x2, or x1) |
| Form Factor | Low-profile PCIe stand-up (tall bracket pre-installed, short bracket included as an accessory) |
| Latency | 750ns (typical) |
| Message Rate | Up to 200 million messages per second (Mpps) |
| RDMA Support | RoCE, RoCE for overlay networks, XRC, DCT, out-of-order RDMA, Adaptive Routing |
| Storage Offloads | NVMe-oF target offload, T10-DIF (Signature Handover), SRP, iSER, NFS RDMA |
| Virtualization | SR-IOV: up to 512 VFs, 8 PFs per host, address translation, QoS per VM |
| Management Interfaces | NC-SI over MCTP (SMBus & PCIe), PLDM, I2C, SPI Flash, JTAG |
| Remote Boot | PXE, iSCSI remote boot, UEFI |
| Dimensions | Low-profile (2.71" x 6.6") |
| RoHS & Certifications | RoHS compliant, ODCC compatible |

100GbE per port | 750ns latency | 200M messages per second

Compatibility

Operating Systems & Stacks: RHEL/CentOS, Windows Server, FreeBSD, VMware ESXi, OpenFabrics Enterprise Distribution (OFED), WinOF-2, DPDK

Server Interfaces: PCIe 3.0 x16 (auto-negotiates to x8, x4, x2, x1), supports MSI/MSI-X, AER, ACS, ATS, PASID, IBM CAPI v2

Connectors & Cables: Dual QSFP28 cages, passive copper DACs, active optical cables, and transceivers (100GBASE-SR4, LR4, etc.). Backward compatible with 50/40/25/10/1GbE speeds

Ethernet Standards: IEEE 802.3cd (50/100/200GbE), 802.3bj/802.3bm (100GbE), 802.3by (25/50GbE), PFC, ETS, DCBX, IEEE 1588v2
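Because the host link can auto-negotiate down to a narrower width, it is worth confirming after installation that the card actually trained at x16. On Linux, the kernel exposes `current_link_width` and `max_link_width` as sysfs attributes of each PCI device. The sketch below reads them from a device directory; the helper names and the PCI address in the example are hypothetical, and the real address should be looked up with `lspci`.

```python
from pathlib import Path
from typing import Tuple


def read_link_width(device_dir: Path) -> Tuple[int, int]:
    """Return (current, maximum) PCIe link width for one PCI device directory."""
    current = int((device_dir / "current_link_width").read_text().strip())
    maximum = int((device_dir / "max_link_width").read_text().strip())
    return current, maximum


def is_link_degraded(device_dir: Path) -> bool:
    """True when the link trained at fewer lanes than the device supports."""
    current, maximum = read_link_width(device_dir)
    return current < maximum


if __name__ == "__main__":
    # Hypothetical PCI address, used for illustration only.
    dev = Path("/sys/bus/pci/devices/0000:3b:00.0")
    if dev.exists():
        cur, mx = read_link_width(dev)
        state = "degraded" if is_link_degraded(dev) else "full width"
        print(f"{dev.name}: x{cur} of x{mx} ({state})")
    else:
        print(f"{dev} not found; check the address with lspci")
```

A card reporting x8 of x16 is usually seated in a slot that is physically x16 but wired for eight lanes, which halves the available host bandwidth.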

Why Choose MCX516A-CCAT & Starsurge?

- Mellanox Proven Silicon: Market-leading RDMA, ASAP² offloads, and ultra-low latency for mission-critical HPC/AI workloads

- Future-Ready 100GbE: Dual-port density with speed flexibility (100/50/40/25/10/1GbE) protects infrastructure investments

- Hardware-Accelerated Storage & Networking: NVMe-oF target offload and T10-DIF reduce CPU load for storage nodes

- Global Supply & Technical Support: Hong Kong Starsurge Group stocks genuine Mellanox adapters, provides pre-sales compatibility check, and after-sales engineering assistance

- Seamless Integration: Works with all major hypervisors, DPDK, and accelerated networking frameworks

About Hong Kong Starsurge Group

Founded in 2008, Hong Kong Starsurge Group Co., Limited is a technology-driven provider of network hardware, IT services, and system integration solutions. Serving global clients in government, healthcare, manufacturing, education, finance, and enterprise, Starsurge supplies network switches, NICs, wireless access points, controllers, cables, and IoT solutions.

As a trusted partner for NVIDIA Networking / Mellanox products, Starsurge ensures genuine hardware, competitive pricing, and responsive technical support for data center upgrades, HPC cluster deployments, and storage modernization.
